BaDExpert: Extracting Backdoor Functionality for Accurate Backdoor Input Detection

[pdf] Preprint (Under Review), 2023

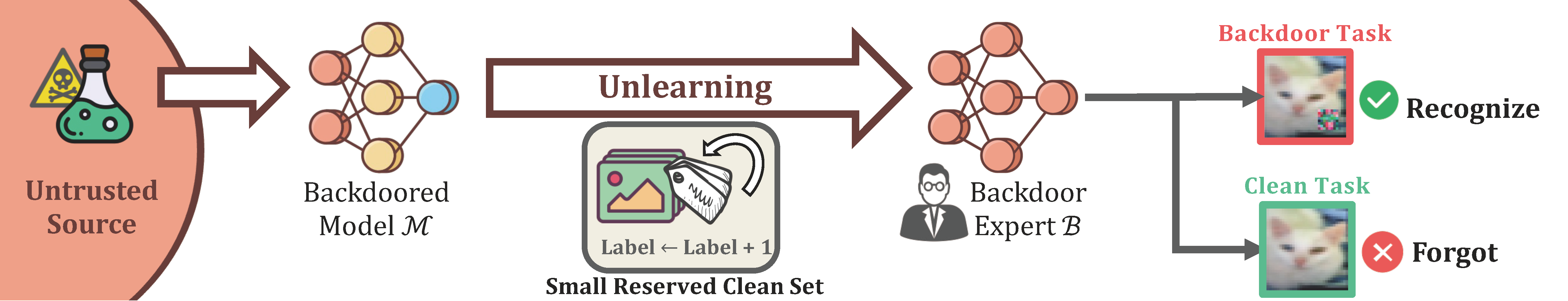

To defend against backdoor attacks, we introduce a novel approach that directly extracts the backdoor functionality into a backdoor expert model (see the figure below), rather than extracting backdoor triggers.

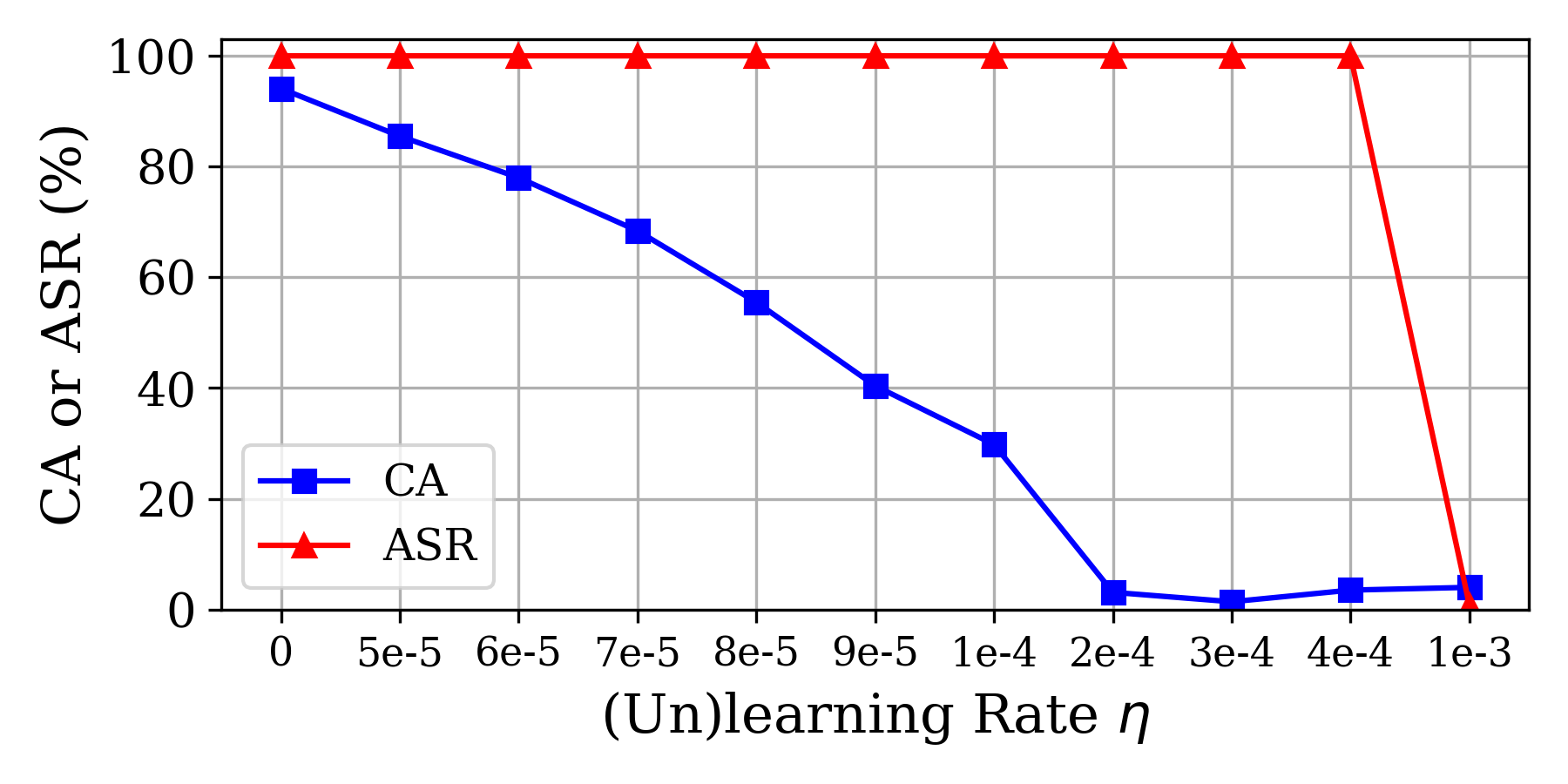

Our approach relies on a remarkably straightforward technique: gently finetuning the backdoored model on a small set of deliberately mislabeled clean samples. The reasoning behind this technique lies in an intriguing characteristic of backdoored models: such finetuning causes the model to forget its regular functionality, resulting in low clean accuracy, while its backdoor functionality remains intact, leading to a high attack success rate (see the figure below).

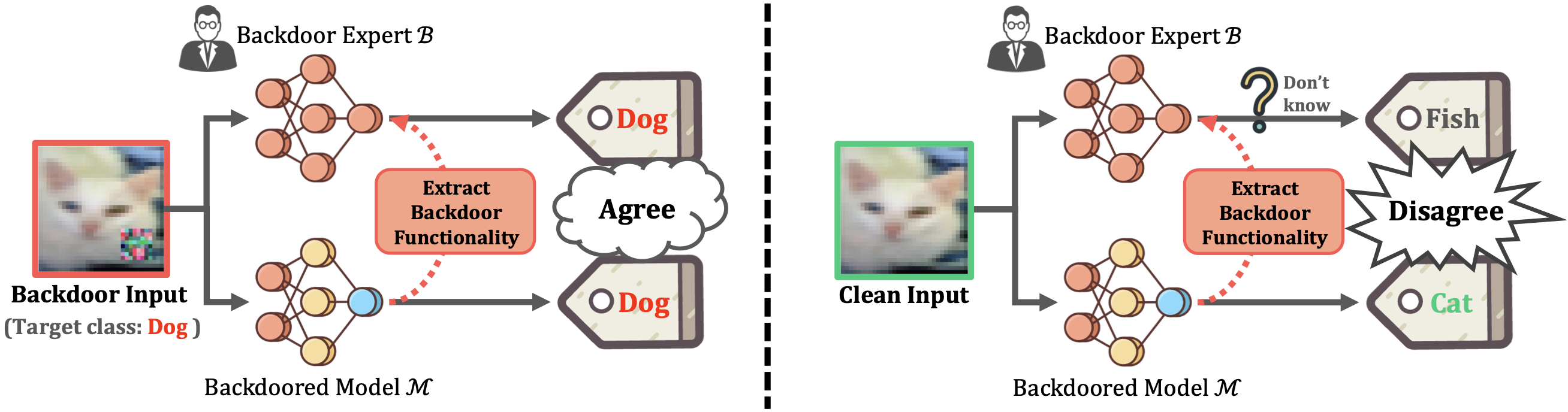
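As a rough illustration of the "deliberately mislabeled clean samples" used for finetuning, the sketch below builds wrong labels by shifting each class index. The helper name `mislabel`, the `shift` parameter, and the shift-by-one rule are assumptions made for this example; any scheme that guarantees an incorrect label would serve the same purpose, and the finetuning loop itself is omitted.

```python
def mislabel(labels, num_classes, shift=1):
    """Return deliberately wrong labels by shifting each class index.

    Hypothetical helper: the shift rule is an assumed way to guarantee
    that every new label differs from the true one.
    """
    if num_classes < 2:
        raise ValueError("need at least two classes to mislabel")
    if shift % num_classes == 0:
        raise ValueError("shift must change the label")
    return [(y + shift) % num_classes for y in labels]


# True labels of a small reserved clean set (toy values).
clean_labels = [0, 3, 9, 5]

# Labels to finetune on: each one is guaranteed to be incorrect.
wrong_labels = mislabel(clean_labels, num_classes=10)
```

Finetuning the backdoored model on the clean inputs paired with `wrong_labels`, using a small learning rate, would then correspond to the gentle finetuning step described above.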

The resulting model (dubbed the backdoor expert model) can subsequently be used to shield the original model from backdoor attacks. In particular, we demonstrate that it is feasible to devise a highly accurate backdoor input filter using a backdoor expert model; the high-level intuition is illustrated in the figure below. In practice, the efficacy of this approach is further improved by a more fine-grained design with an ensembling strategy (see our paper for more details).
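One way to read the filtering intuition is as an agreement check, sketched below under stated assumptions. Because the backdoor expert has lost its clean accuracy but kept its backdoor behavior, it should disagree with the original model on clean inputs and agree with it on backdoor inputs. The function name `flag_backdoor_inputs` and the hard agreement rule are assumptions for this example, not the detector from the paper, which uses a finer-grained design with ensembling.

```python
def flag_backdoor_inputs(original_preds, expert_preds):
    """Flag inputs on which the two models predict the same class.

    Hypothetical decision rule: agreement between the original model and
    the backdoor expert is treated as evidence of a backdoor input.
    """
    if len(original_preds) != len(expert_preds):
        raise ValueError("prediction lists must have the same length")
    return [o == e for o, e in zip(original_preds, expert_preds)]


# Toy predicted class indices for four inputs (made-up values).
original_preds = [2, 7, 0, 0]  # original (possibly backdoored) model
expert_preds = [5, 1, 0, 0]    # backdoor expert model

flags = flag_backdoor_inputs(original_preds, expert_preds)
```

In this toy case the last two inputs are flagged, since both models predict the same class for them; flagged inputs would be rejected before the original model's prediction is used.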